The corner of your eye

20 May 2016

We usually think that our eyes work like a camera, giving us a sharp, colorful picture of the world all the way from left to right and top to bottom. But we actually only get this kind of detail in a tiny window right where our eyes are pointed. If you hold your thumb out at arm’s length, the width of your thumbnail is about the size of your most precise central (also called “foveal”) vision. Outside of that narrow spotlight, both color perception and sharpness drop off rapidly - doing high-precision tasks like reading a word is almost impossible unless you’re looking right at it.
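To put a rough number on the thumbnail rule of thumb, here is a minimal sketch that converts an object's width and viewing distance into a visual angle. The thumbnail width (1.5 cm) and arm length (60 cm) are illustrative assumptions rather than measurements from the paper, and `visual_angle_deg` is just a helper name made up for this example.

```python
import math

def visual_angle_deg(size_cm, distance_cm):
    """Angle (in degrees) that an object of width size_cm covers
    when viewed straight-on from distance_cm away."""
    return math.degrees(2 * math.atan(size_cm / (2 * distance_cm)))

# Assumed, illustrative values: not taken from the paper.
thumbnail_width_cm = 1.5
arm_length_cm = 60.0

angle = visual_angle_deg(thumbnail_width_cm, arm_length_cm)
print(f"Thumbnail at arm's length covers about {angle:.1f} degrees")
```

With these assumed numbers the thumbnail covers between one and two degrees, out of a visual field that spans well over a hundred degrees from side to side. Your own thumb and arm will give a slightly different answer.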

The rest of your visual field is your “peripheral” vision, which has only imprecise information about shape, location, and color. Out here in the corner of your eye you can’t be sure of much, and horror movies and the occult exploit this as a constant source of fear and uncertainty:

What’s that in the mirror, or the corner of your eye?

What’s that footstep following, but never passing by?

Perhaps they’re all just waiting, perhaps when we’re all dead,

Out they’ll come a-slithering from underneath the bed….

Doctor Who “Listen”

What does this peripheral information get used for during visual processing? It was shown over a decade ago (by one of my current mentors, Uri Hasson) that flashing pictures in your central and peripheral vision activate different brain regions. The hypothesis is that peripheral information gets used for tasks like determining where you are, learning the layout of the room around you, and planning where to look next. But this experimental setup is pretty unrealistic. In real life we have related information coming into both central and peripheral vision at the same time, which is constantly changing and depends on where we decide to look. Can we track how visual information flows through the brain during natural viewing?

Today a new paper from me and my PhD advisors (Fei-Fei Li and Diane Beck) is out in the Journal of Vision: Pinpointing the peripheral bias in neural scene-processing networks during natural viewing (open access). I looked at fMRI data (collected and shared generously by Mike Arcaro, Sabine Kastner, Janice Chen, and Asieh Zadbood) recorded while people were watching clips from movies and TV shows. They were free to move their eyes around and watch as they normally would, except that they were inside a huge superconducting magnet rather than on the couch (and had less popcorn). We can disentangle central and peripheral information by tracking how these streams flow out of their initial processing centers in visual cortex to regions performing more complicated functions like object recognition and navigation.

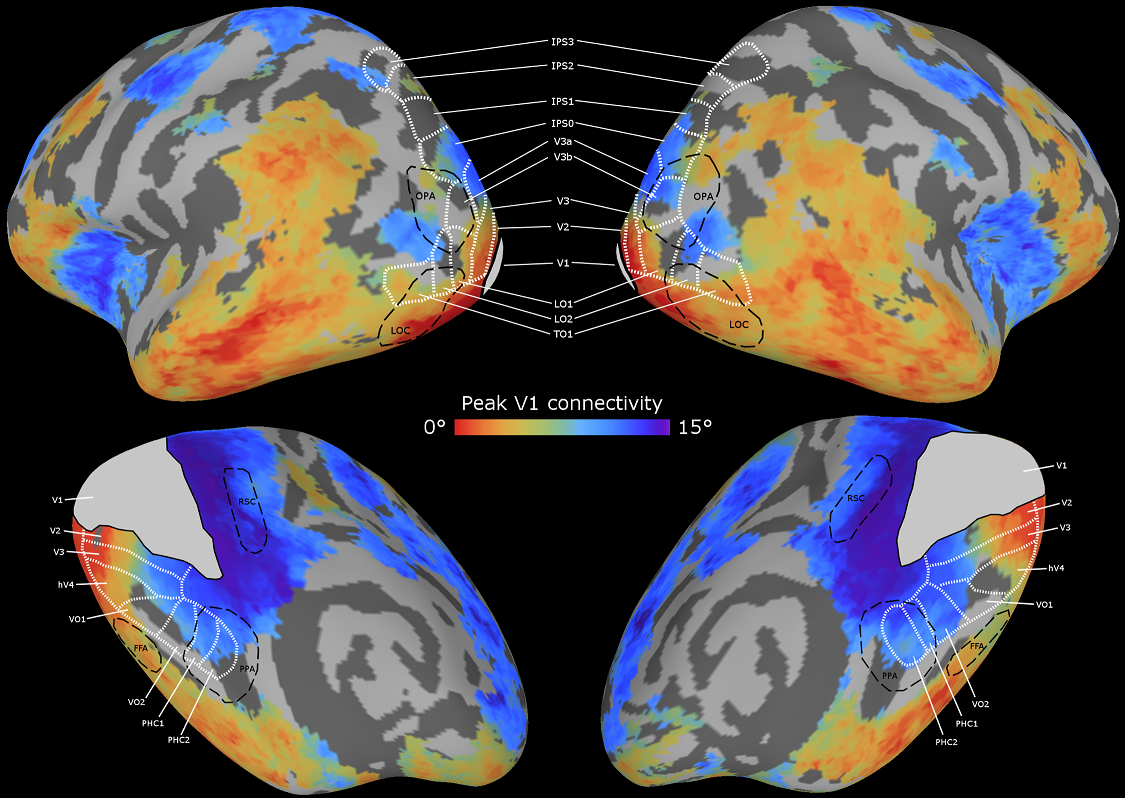

We can make maps that show where foveal information ends up (colored orange/red) and where peripheral information ends up (colored blue/purple). I’m showing this on an “inflated” brain surface where we’ve smoothed out all the wrinkles to make it easier to look at:

This roughly matches what we had previously seen with the simpler experiments: central information heads to regions for recognizing objects, letters, and faces, while peripheral information gets used by areas that process environments and big landmarks. But it also reveals some finer structure we didn’t know about before. Some scene processing regions care more about the “near” periphery just outside the fovea and still have access to relatively high-resolution information, while others draw information from the “far” periphery that only provides coarse information about your current location. There are also detectable foveal vs. peripheral differences in the frontal lobe of the brain, which is pretty surprising, since this part of the brain is supposed to be performing abstract reasoning and planning that shouldn’t be all that related to where the information is coming from.

This paper was my first foray into the fun world of movie-watching data, which I’ve become obsessed with during my postdoc. Contrary to what everyone’s parents told them, watching TV doesn’t turn off your brain - you use almost every part of your brain to understand and follow along with the story. Answering questions about videos is such a challenging problem that even the latest computer AIs are pretty terrible at it (though some of my former labmates have started making them better). We’re finding that movies drive much stronger and more complex activity patterns than the usual paradigm of flashing individual images, and we’re starting to answer questions raised by cognitive scientists in the 1970s about how complicated situations are understood and remembered - stay tuned!

Comments? Complaints? Contact me @ChrisBaldassano